Robotics

Hiwonder

Hiwonder MentorPi M1 Raspberry Pi Robot Car – Mecanum wheel ROS2 Robot, with LLM ChatGPT, SLAM and AI Vision and Voice Interaction

【Raspberry Pi 5 & ROS2】Powered by RPi 5 and compatible with ROS2, MentorPi car is an ideal platform for developing AI robots. The system is fully programmable in Python, making it accessible for a wide range of projects.

【Choose Your Chassis】 Whether you need the precision of an Ackermann chassis or the maneuverability of Mecanum wheels, MentorPi raspi car has you covered. Its flexible design lets you switch between configurations to fit your unique needs.

【Top-Tier Hardware Inside】 Packed with high-performance components like closed-loop encoder motors, a TOF lidar, a 3D depth camera, and high-torque servos, MentorPi RPi robot delivers speed and accuracy.

【Advanced AI】 From SLAM mapping and path planning to multi-robot coordination and vision recognition, MentorPi robot supports a wide range of AI applications.

【Autonomous Driving】 Utilize YOLOv11 model training to enable advanced autonomous driving features, including road sign and traffic light recognition. This provides an excellent platform for learning and developing autonomous driving.

【Advanced Interaction】 Hiwonder MentorPi deploys multimodal models with ChatGPT at its core. By integrating a 3D vision system and an AI voice interaction box, the robot gains enhanced perception, reasoning, and actuation. This enables advanced embodied AI applications and delivers natural interaction.

Hiwonder

Hiwonder miniAuto AI Vision Robot Based on Arduino UNO R3 Controller with 360° Omnidirectional Mecanum Wheels, Supports Arduino Programming

【Compatible with Arduino】 Features an Arduino UNO R3 controller and an expansion board, ensuring full compatibility with Arduino programming. Hiwonder miniAuto car also provides ample expansion ports for secondary development.

【Vision Recognition & Tracking】 Equipped with an ESP32-S3 vision module, miniAuto supports WiFi video transmission and enables applications such as vision line following, AI face recognition, and color tracking.

【360° Omnidirectional Movement】 With Mecanum wheels, miniAuto can move in any direction, supporting various motion modes to navigate complex surfaces effortlessly.

【Autonomous Driving】 With a 4-channel line follower and the vision module, miniAuto can perform line following, crossroad recognition, traffic light detection, and more autonomous driving capabilities.

【Robot Gripper Expansion】 This robotic gripper expansion enables object transportation, line following, visual transport, and numerous other creative projects, taking your creativity to the next level.

Hiwonder

Hiwonder MechDog Open Source AI Robot Dog with AI Vision & Voice Interaction, Programmable with Scratch, Arduino, and Python

【Driven by Coreless Servos】 Hiwonder MechDog is equipped with 8 high-speed coreless servos, providing exceptional torque, rapid response, robust force, and high accuracy. Its leg linkage structure enables swift, precise, agile and stable walking in any environment.

【Inverse Kinematics for Flexible Movement】 MechDog features built-in inverse kinematics that support real-time adjustments of walking direction, gait and posture, resulting in more flexible and lifelike movements.

【Cross-Platform Control with Multiple Programming Options】 Hiwonder MechDog supports control via PC software and a mobile app. It can be programmed using Python, Scratch, or Arduino, offering a variety of programming options.

【Extensive Expansion for Creativity】 MechDog can be enhanced with various sensors and electronic modules. It is also compatible with LEGO components, allowing for a broad range of creative applications.

Hiwonder

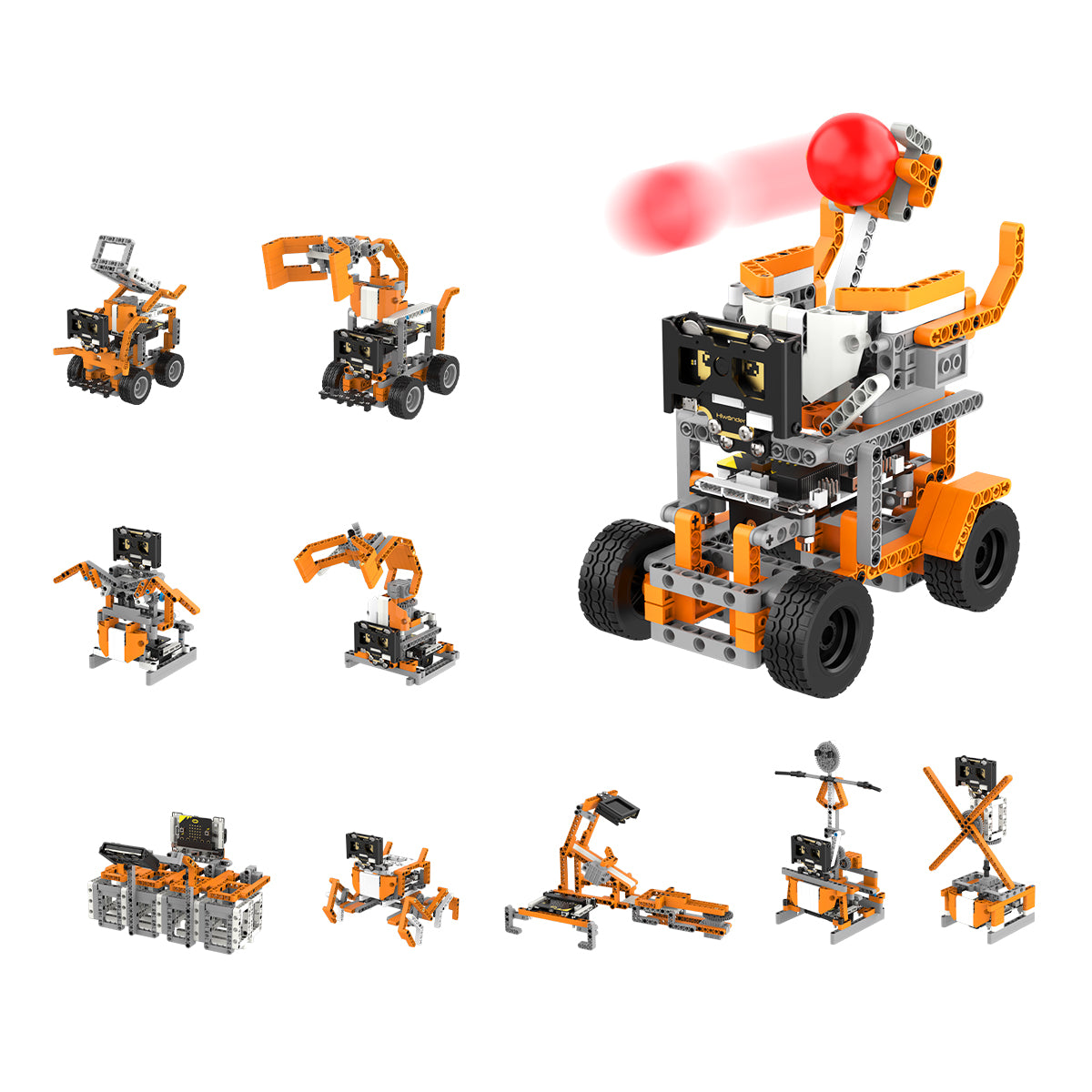

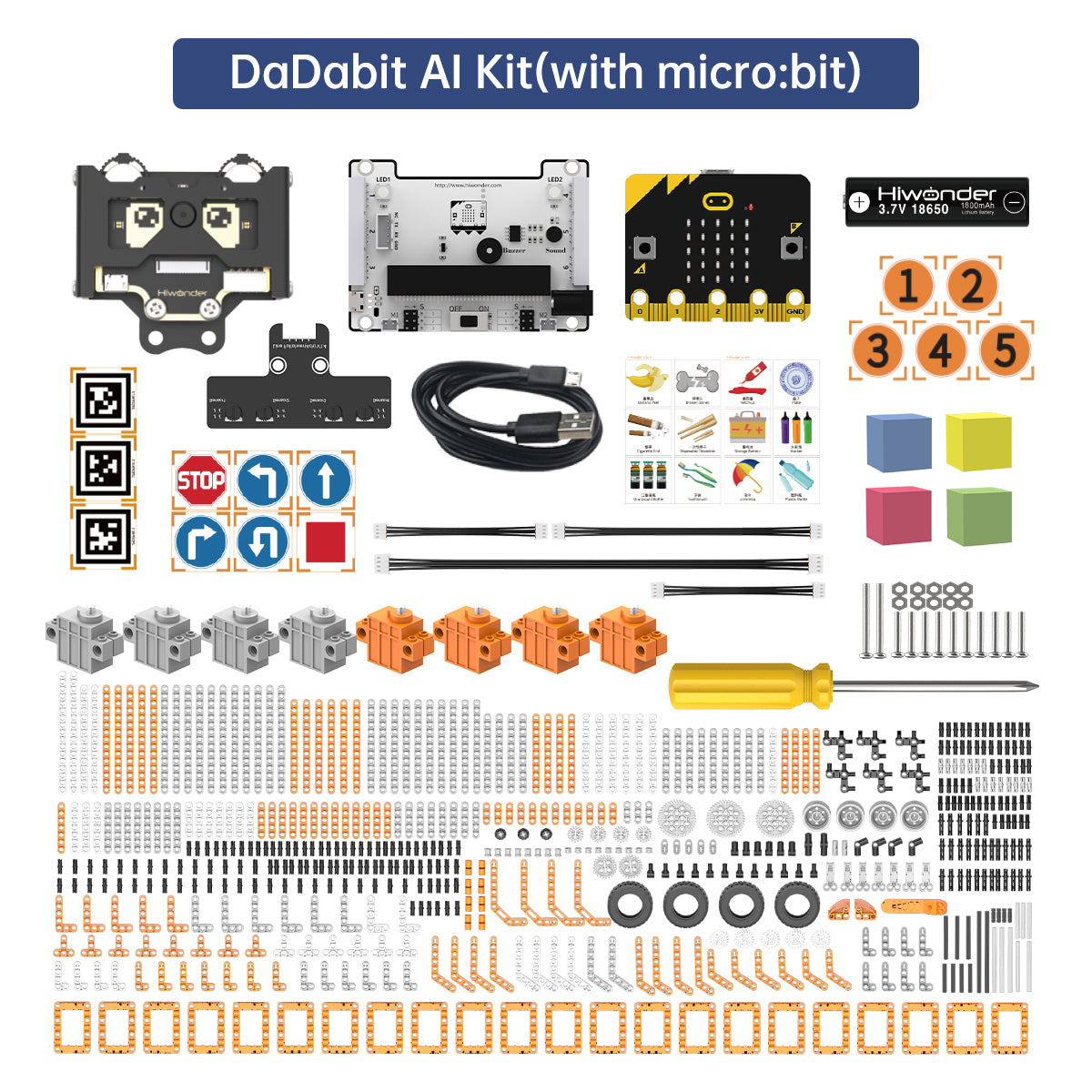

DaDa:bit AI Programmable Building Block Kit Powered by micro:bit with AI Vision Module Supports Sensor Expansion

- 【One-click Training and Learning】DaDa:bit AI features a high-performance WonderCam AI vision module with an integrated HD camera. The module has built-in learning algorithms, allowing it to complete diverse AI vision projects such as color recognition and vision line following.

- 【Support micro:bit Programming】DaDa:bit AI is a programmable robot kit that uses micro:bit as its core controller, making it suitable for learning micro:bit programming. Programs are built by dragging and dropping block-style modules, which is easy to learn and lets children start programming with no barrier to entry while exercising logical thinking.

- 【Multiple Shapes Support Sensor Expansion】DaDa:bit AI has ten shapes, which can be combined with a variety of electronic modules and sensors. Together with the WonderCam AI vision module, it can quickly realize a variety of creative projects.

【Arduino Programming, Open Source】 Hiwonder miniArm is built on the Atmega328 platform and compatible with Arduino programming. With open source programs, detailed tutorials, and secondary development examples, miniArm is well suited to beginners who want to develop and program their own robotic arm.

【High Performance Hardware, Support Sensor Expansion】 miniArm is equipped with a 6-channel knob controller, a Bluetooth module, high-precision digital servos, and other hardware. It also provides multiple expansion ports for sensor integration, including ESP32 Cam, accelerometer, touch sensor, and glowy ultrasonic sensor, so users can carry out secondary development for ultrasonic ranging and pose control.

【Versatile Control Options】 Hiwonder miniArm supports app control, and users can utilize knob potentiometers for real-time knob control and offline action editing.

Hiwonder

Open-Source Robotic Hand AiHand Powered by micro:bit V2 Programming Educational Robot, Support WonderCam AI Vision Module

- 【micro:bit Programming, AI Vision Robotic Hand】AiHand is an open-source robotic hand powered by micro:bit. AiHand features a high-performance WonderCam AI vision module with an integrated HD camera. This module enables various AI applications, including color recognition, tag recognition, and more.

- 【Powerful Hardware, Support Secondary Development】AiHand is equipped with an AI vision module and a glowy ultrasonic sensor. Multiple expansion interfaces are reserved so that users can carry out secondary development of the robotic hand.

- 【One-click Training and Learning】AiHand's AI vision module has built-in learning algorithms, allowing it to complete diverse AI vision projects such as waste sorting and tag recognition.

- 【Various Control Methods & MakeCode Programming】AiHand supports Android/iOS app control and micro:bit control. AiHand also supports MakeCode programming, in which programs are built by dragging and dropping block-style modules, which is easy to learn.

Hiwonder

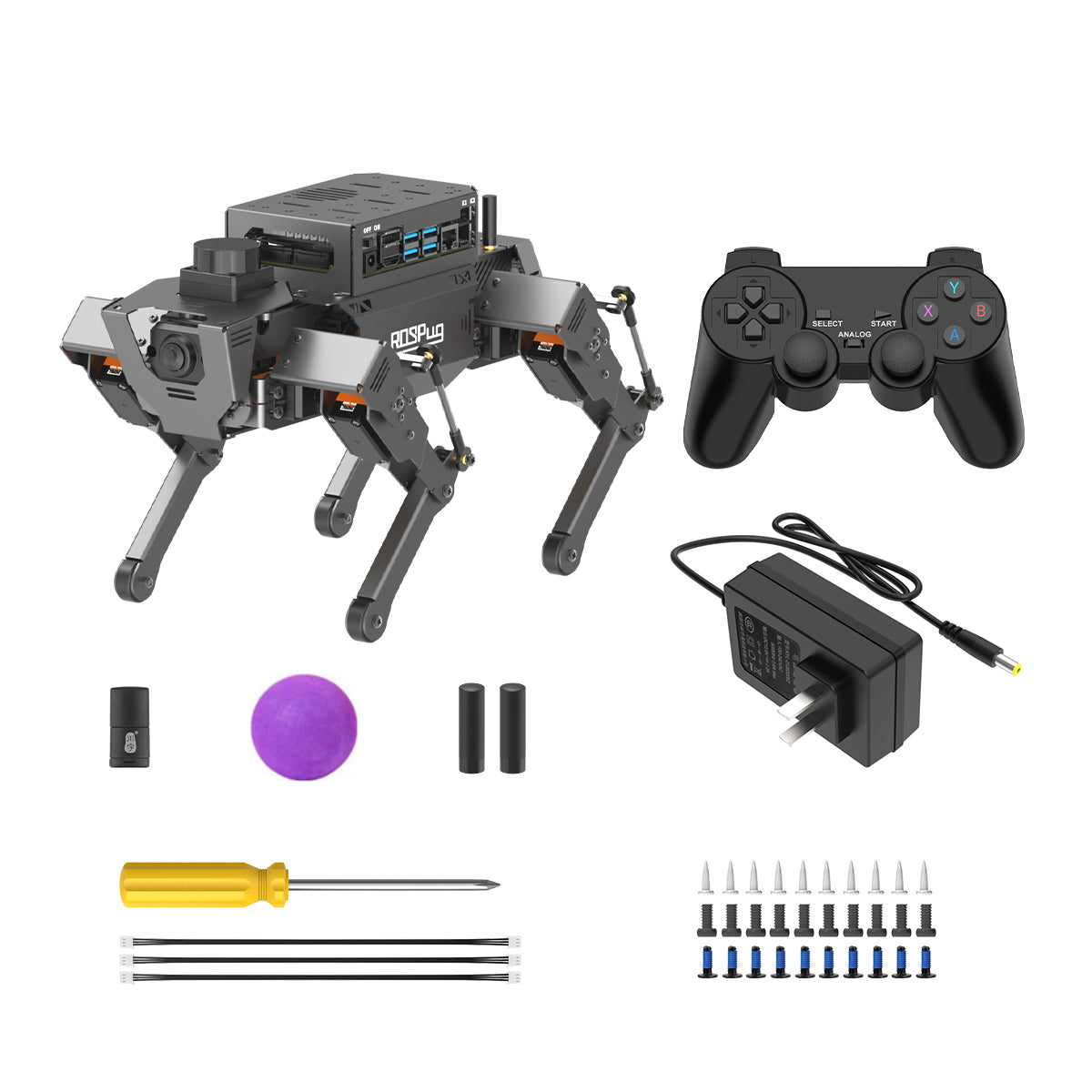

Hiwonder ROSPug Quadruped Bionic Robot Dog Powered by Jetson Nano ROS Open Source Python Programming

【Driven by Jetson Nano and high-voltage intelligent serial bus servos】 ROSPug is a smart quadruped robot dog driven by Jetson Nano and built on the Robot Operating System (ROS). It is equipped with 12 high-voltage strong-magnetic intelligent serial bus servos, delivering high-precision performance, rapid rotation speed, and robust torque.

【AI Vision, Unlimited Creativity】 Hiwonder ROSPug is equipped with an HD wide-angle camera. It uses the OpenCV library for efficient image processing, enabling a diverse range of AI applications, including target recognition, localization, line following, obstacle avoidance, face detection, ball shooting, color tracking and tag recognition.

【Various Control Methods and Live Camera Feed】 You can conveniently control ROSPug through the WonderROS app available for Android and iOS devices, PC software, or a wireless PS2 handle. Additionally, ROSPug provides an FPV experience by transmitting the live camera feed to the app.

【Gait Planning, Free Adjustment】 ROSPug incorporates an inverse kinematics algorithm that offers precise control over the touch time, lift time, and lifted height of each leg. You can easily adjust these parameters to achieve different gaits, including ripple and trot. Additionally, ROSPug provides a detailed analysis of inverse kinematics, along with the source code for the inverse kinematics function.

- The ROSPug's color was upgraded from black to gray in May 2025.
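The gait parameters named for ROSPug above (touch time, lift time, and lifted height of each leg) can be pictured with a small sketch. This is not Hiwonder's source code: the function `foot_height`, the half-sine swing profile, the `TROT_PHASES` table, and all numeric values are assumptions chosen only for illustration.

```python
import math

def foot_height(t, touch_time, lift_time, lift_height, phase=0.0):
    """Height of one foot above the ground at time t (hypothetical model).

    The foot stays on the ground for touch_time seconds, then swings for
    lift_time seconds along a half-sine arc that peaks at lift_height.
    phase shifts the leg within the cycle as a fraction of one period.
    """
    period = touch_time + lift_time
    local = (t + phase * period) % period
    if local < touch_time:
        return 0.0  # stance: foot is in contact with the ground
    swing = (local - touch_time) / lift_time  # 0..1 progress through the swing
    return lift_height * math.sin(math.pi * swing)

# Assumed trot pattern: diagonal leg pairs move together, half a cycle apart.
TROT_PHASES = {
    "front_left": 0.0,
    "rear_right": 0.0,
    "front_right": 0.5,
    "rear_left": 0.5,
}

if __name__ == "__main__":
    for leg, phase in TROT_PHASES.items():
        z = foot_height(0.15, touch_time=0.3, lift_time=0.3,
                        lift_height=0.02, phase=phase)
        print(f"{leg}: {z:.3f} m")
```

Changing `touch_time`, `lift_time`, or the phase table is enough to move between gaits in this toy model; a real controller would feed the resulting foot positions into inverse kinematics to get servo angles.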

Hiwonder

Hiwonder uHand UNO Open Source AI Bionic Robot Hand Supports Somatosensory Control, Arduino Programming

【Arduino Programming, Open Source】 Hiwonder uHand UNO is built on the Atmega328 platform and compatible with Arduino programming. The uHand UNO programs are open-source. With detailed tutorials and example projects for secondary development, users can easily customize and expand their robotic hand.

【High Performance, Support Sensor Expansion】 uHand UNO features a 6-channel knob controller, a Bluetooth module, six anti-blocking servos, and other high-performance hardware. With multiple expansion ports supporting sensors like ESP32 Cam, accelerometer, touch sensor, and RGB ultrasonic sensor, users can easily perform secondary development to enable ultrasonic ranging, Bluetooth communication, and pose control.

【Versatile Control Options】 uHand UNO supports both app control and wireless glove control. Users can utilize knob potentiometers for real-time knob control and offline action editing.

- 【High-quality AI Education Demonstration System】AiArm is a smart vision robot arm powered by a self-developed controller, CoreX. It adopts high-performance intelligent servos and vision modules and can be programmed in Scratch and Python. With its impressive capabilities, AiArm unlocks diverse AI applications, such as smart vision-guided recognition and grasping.

- 【AI Vision Recognition and Tracking】AiArm combines the WonderCam AI vision module to recognize and locate target objects, enabling the implementation of AI applications like color sorting and waste sorting.

- 【Different Motion Control Methods】Whether it's PC software control, offline control, or inverse kinematics control, you have the versatility to design and edit various actions, unlocking the full potential of the robot arm's capabilities.

- 【Multiple Sensor Expansion】When integrated with sensors and modules, AiArm can perform various interesting functions, such as an AI face tracking fan and an ultrasonic vision hunt.

Hiwonder

Hiwonder JetArm ROS1/ROS2 3D Vision Robot Arm, with Multimodal AI Model (ChatGPT), AI Voice Interaction and Vision Recognition, Tracking & Sorting

【AI-Driven and Jetson-Powered】Hiwonder JetArm is a high-performance 3D vision robot arm developed for ROS education scenarios. It is equipped with the Jetson Nano, Orin Nano, or Orin NX as the main controller, and is fully compatible with both ROS1 and ROS2. With Python and deep learning frameworks integrated, JetArm is ideal for developing sophisticated AI projects.

【High-Performance AI Robotics】Hiwonder JetArm features 6 intelligent serial bus servos with a torque of 35KG. The robot arm is equipped with a 3D depth camera, a built-in 6-microphone array, and Multimodal AI Large Models, enabling a wide variety of applications, such as 3D spatial grabbing, target tracking, object sorting, scene understanding, and voice control.

【Depth Point Cloud, 3D Scene Flexible Grabbing】JetArm is equipped with a high-performance 3D depth camera. Using the RGB data, position coordinates and depth information of the target, combined with RGB+D fusion detection, Hiwonder JetArm can grab objects freely in 3D scenes and support other AI projects.

【Enhanced Human-Robot Interaction Powered by AI】JetArm leverages Multimodal AI Large Models to create an interactive system centered around ChatGPT. Paired with its 3D vision capabilities, JetArm boasts outstanding perception, reasoning, and action abilities, enabling more advanced embodied AI applications and delivering a natural, intuitive human-robot interaction experience.

【Advanced Technologies & STEAM Education Tutorials】With JetArm, you will master a broad range of cutting-edge technologies, including ROS development, 3D depth vision, OpenCV, YOLOv11, MediaPipe, AI models, robotic inverse kinematics, MoveIt, Gazebo simulation, and voice interaction. We provide in-depth learning materials and video tutorials to guide you step by step, ensuring you can confidently develop your own AI powered robotic arm.

Hiwonder

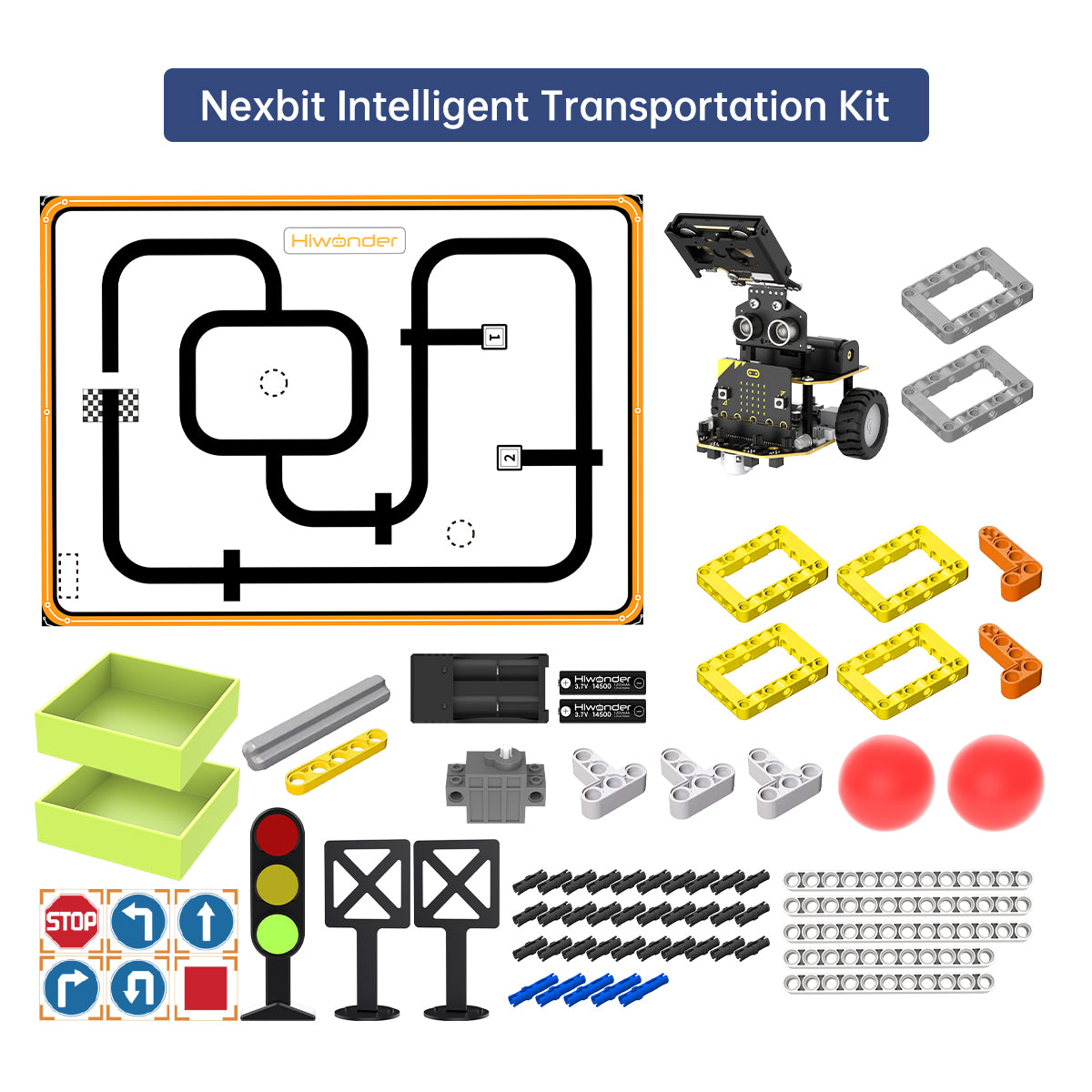

AI Vision Robot Nexbit, micro:bit Programming Educational Robot, Support WonderCam Smart Vision Module

- 【micro:bit Programming, AI Vision Robot】Nexbit is an AI smart robot car powered by micro:bit. Nexbit features a high-performance WonderCam AI vision module with an integrated HD camera. This module enables various AI applications, including color recognition, vision line following, target tracking, and more.

- 【Powerful hardware】Nexbit boasts an impressive array of features within its compact body, including a high-precision 4-channel knob line follower, an AI vision module, a glowy ultrasonic sensor, an infrared receiver, RGB lights, and other electronic components.

- 【One-click Training and Learning】Nexbit's AI vision module has built-in learning algorithms, allowing it to complete diverse AI vision projects such as waste sorting and tag tracking.

- 【Various Control Methods & MakeCode Programming】Nexbit supports Android/iOS app control and Handlebit remote control. Nexbit also supports MakeCode programming, in which programs are built by dragging and dropping block-style modules, which is easy to learn.

Hiwonder

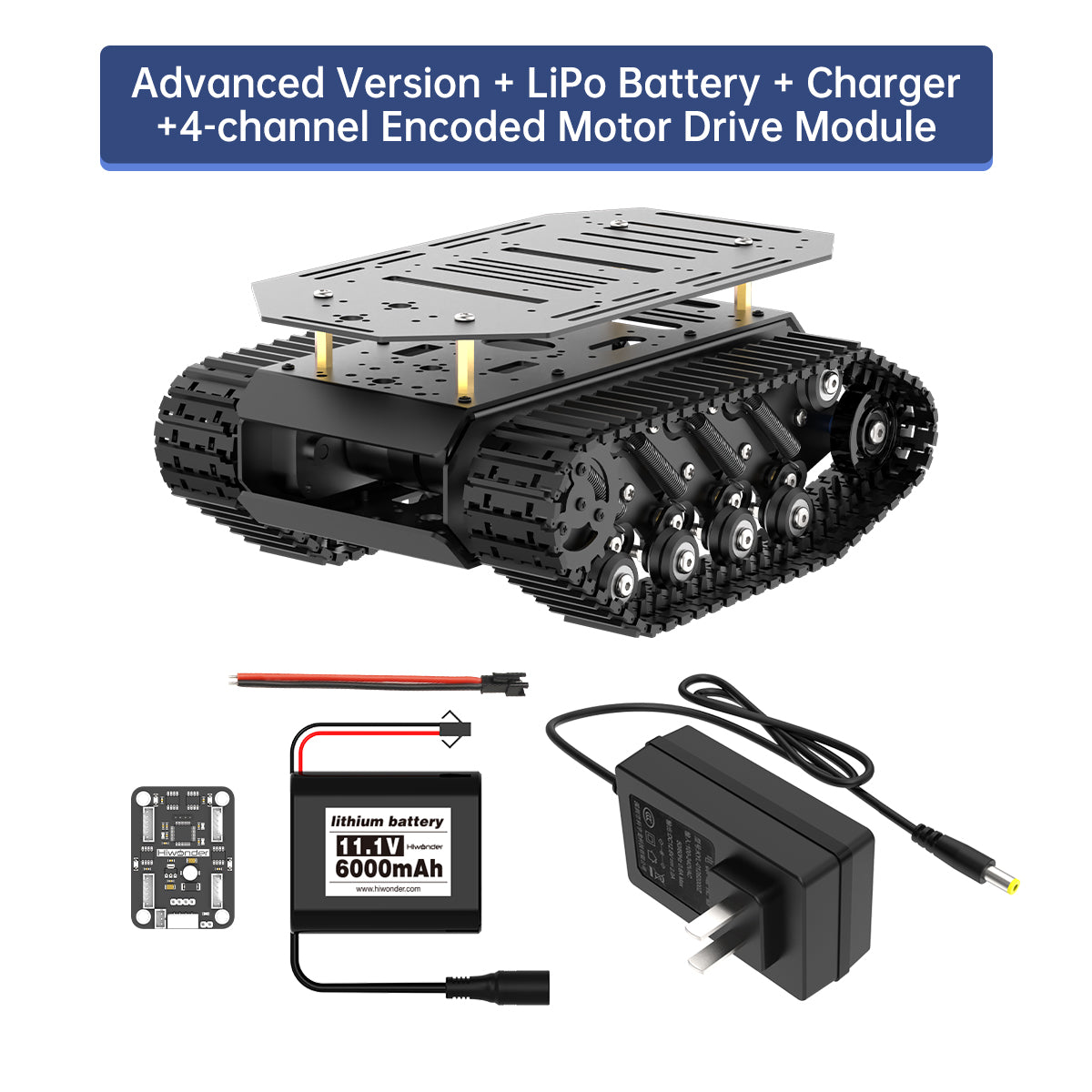

Hiwonder Track Chassis/ Suspension Shock Absorption Full-Metal Tank Robot Encoder Motor/ Smart Car Chassis

【High-quality vibration reduction effect】 The chassis incorporates an 8-channel high-elasticity carbon steel tension spring and is equipped with micro bearings, ensuring agile maneuverability across diverse terrains.

【Strong robot tank bracket】 The main body is crafted from aluminum alloy and undergoes an anodized surface treatment, resulting in an exquisite appearance. The top layer can be easily removed, facilitating DIY development.

【More extended functions】 The bracket contains multiple expansion ports and is fully compatible with popular controllers on the market such as Jetson Nano, Raspberry Pi, and Arduino. You can also add multiple sensors and servos to create your robot.

【Application】 This chassis is perfect for hobbyists, education, competitions and research projects. Many schools and education departments choose this car chassis for students learning about AI robots.

【Version Difference】 The standard version is a single-layer track chassis; the advanced version is a double-layer track chassis.

Hiwonder

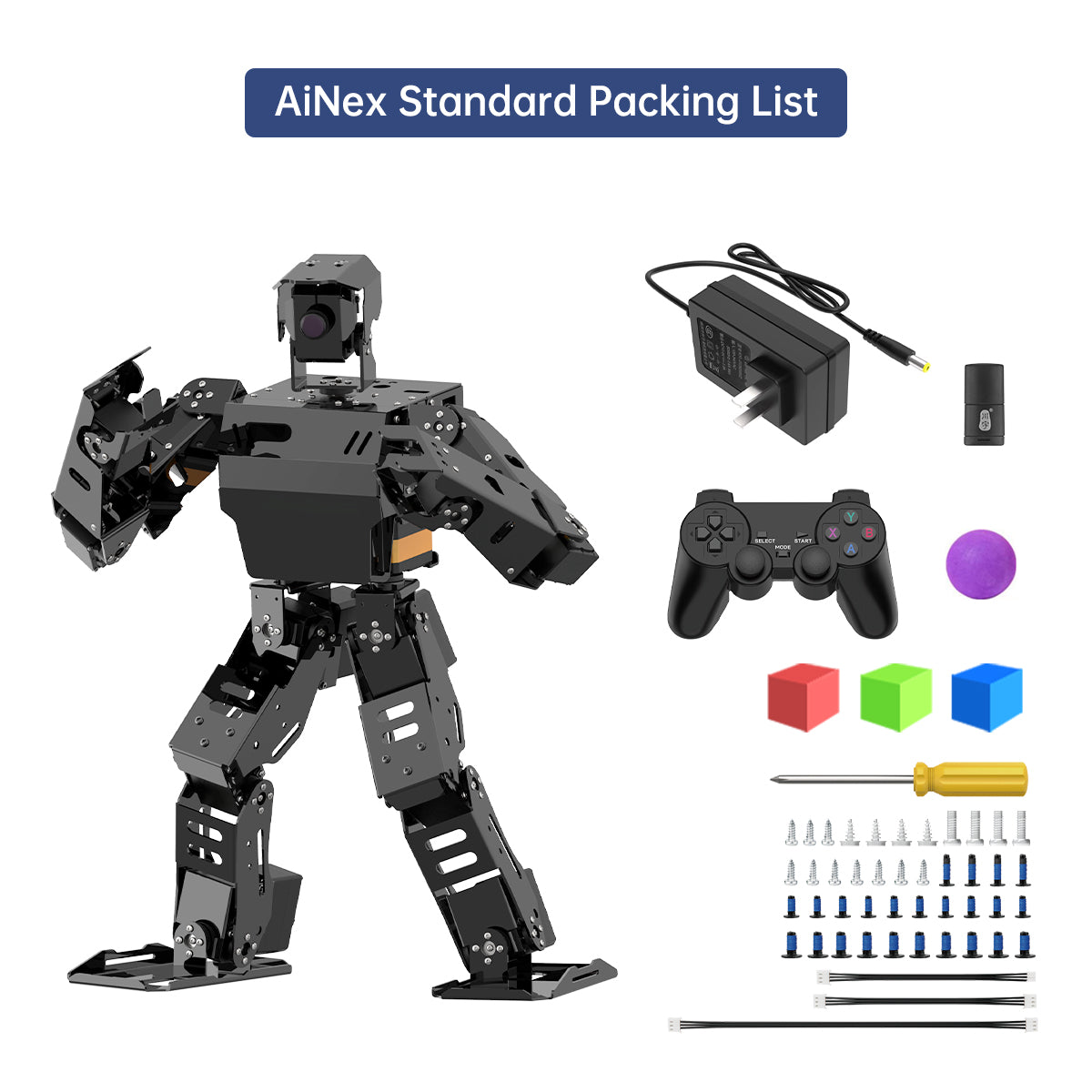

Hiwonder AiNex Biped Humanoid Robot Raspberry Pi Vision AI Kit Powered by ROS Inverse Kinematics Algorithm

【High-performance Hardware Configurations】 Hiwonder AiNex is a professional AI humanoid robot capable of vividly mimicking human actions. The AiNex robot is built on the Robot Operating System (ROS) and features a Raspberry Pi, 24 intelligent serial bus servos, an HD camera, and movable mechanical hands.

【Advanced Inverse Kinematics Gait】 AiNex smart humanoid robot integrates an inverse kinematics algorithm for flexible pose control and gait planning for omnidirectional movement.

【Outstanding Vision AI Recognition and Tracking】 Leveraging machine vision and OpenCV, Hiwonder AiNex delivers precise object recognition and tracking, ensuring high accuracy and reliability for your AI applications.

【Robot Control Across Platforms】 AiNex provides multiple control methods, including the WonderROS app (compatible with iOS and Android), a wireless handle, and PC software.

【Detailed Tutorials and Professional After-sales Service】 AiNex bipedal robot kit offers an extensive collection of tutorials covering up to 18 topics.

Hiwonder

Hiwonder JetAcker AI Robot Kit – NVIDIA Jetson-Powered ROS1/ROS2 Educational Coding Robot with multimodal AI model (ChatGPT), Voice Control, AI Vision Interaction & SLAM

【AI-Driven & Jetson-Powered】 Hiwonder JetAcker is a high-performance ROS robot for STEAM education. Equipped with Jetson Nano/Orin Nano/Orin NX controllers and compatible with both ROS1 and ROS2, JetAcker integrates deep learning frameworks with TensorRT acceleration.

【SLAM Development and Diverse Configuration】Hiwonder JetAcker is equipped with a powerful combination of a 3D depth camera and Lidar. It utilizes a wide range of advanced algorithms including gmapping, hector, karto, cartographer and RRT, enabling precise multi-point navigation, TEB path planning, and dynamic obstacle avoidance. Using 3D vision, JetAcker AI robot can capture point cloud images of the environment to achieve RTAB 3D mapping navigation.

【AI Large Model Empowered, Human-Robot Interaction Redefined】 JetAcker deploys multimodal models with ChatGPT at its core, integrating 3D vision and a 6-microphone array. This synergy enhances its perception, reasoning, and actuation capabilities, enabling advanced embodied AI applications and delivering natural, context-aware human-robot interaction.

【Classical Ackermann Steering Mechanism】 The Ackermann chassis combines maneuverability and steering precision, facilitating the learning and validation of real-world vehicle steering principles. This design enables realistic simulation of autonomous driving scenarios for enhanced educational experiences.

【STEAM Education Tutorials】 Hiwonder JetAcker's structured curriculum guides users to master cutting-edge technologies including ROS development, SLAM mapping and navigation, 3D depth vision, OpenCV, YOLO26, MediaPipe, large AI model integration, MoveIt and Gazebo simulation, and voice interaction. Supported by extensive documentation and video tutorials, our progressive learning system breaks down complex concepts into digestible modules, guiding you from fundamentals to advanced implementations and empowering you to build your own intelligent robotic systems.
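To make the Ackermann steering principle mentioned for JetAcker more concrete, here is a minimal geometric sketch. It is not taken from the JetAcker source: the function name `ackermann_angles` and the example dimensions are hypothetical, and the model assumes ideal low-speed turning with no wheel slip.

```python
import math

def ackermann_angles(wheelbase, track, turn_radius):
    """Ideal front-wheel steering angles (radians) for a left or right turn.

    wheelbase:   distance between front and rear axles (m)
    track:       distance between the left and right wheels (m)
    turn_radius: distance from the turn center to the rear-axle midpoint (m)

    Returns (inner_angle, outer_angle). The inner wheel follows a tighter
    circle, so it must steer more sharply than the outer wheel.
    """
    if turn_radius <= track / 2:
        raise ValueError("turn_radius must exceed half the track width")
    inner = math.atan(wheelbase / (turn_radius - track / 2))
    outer = math.atan(wheelbase / (turn_radius + track / 2))
    return inner, outer

if __name__ == "__main__":
    # Hypothetical dimensions, not measured from any Hiwonder chassis.
    inner, outer = ackermann_angles(wheelbase=0.2, track=0.2, turn_radius=0.5)
    print(f"inner: {math.degrees(inner):.1f} deg, outer: {math.degrees(outer):.1f} deg")
```

The difference between the two angles shrinks as the turn radius grows, which is why a single steering servo with a suitable linkage can approximate both over the normal steering range.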

Hiwonder

Hiwonder TurboPi Raspberry Pi Robot Car ROS2 with Mecanum Wheels, AI Vision & Tracking, Multimodal Large AI Model ChatGPT / Gemini / Grok / Llama, and Voice Interaction

【Raspberry Pi 5 & ROS2 Platform】 TurboPi runs ROS2 on a Raspberry Pi 5 and leverages Python and OpenCV to deliver efficient AI processing and a wide range of robotic applications.

【Multimodal large AI model & Voice Interaction】 With an integrated multimodal large AI model (ChatGPT / Gemini / Grok / Llama) and AI voice interaction capabilities, TurboPi supports smart conversations, environment awareness, and flexible task execution for richer human-machine interaction.

【AI Vision & Autonomous Driving】 Equipped with a 2-DOF HD camera, TurboPi offers FPV video feedback, object and color recognition, line following, and autonomous driving features—perfect for creative AI projects.

【360° Omnidirectional Movement】 Featuring a robust metal chassis and Mecanum wheels, TurboPi can move in any direction and rotate on the spot, adapting smoothly to various scenarios.

【Comprehensive Code & Learning Resources】 We provide full Python source code, diverse experiment examples, and detailed course materials to support your journey in mastering AI and programming while inspiring endless innovation.
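As an illustration of how Mecanum wheels give TurboPi the omnidirectional movement described above, the sketch below converts a desired body velocity into four wheel speeds. It is not Hiwonder's code: the names `MecanumGeometry` and `mecanum_wheel_speeds` and all dimensions are hypothetical, and the signs assume the common "X" roller layout with forward as +x, left as +y, and counter-clockwise rotation as positive.

```python
from dataclasses import dataclass

@dataclass
class MecanumGeometry:
    wheel_radius: float  # wheel radius in meters (hypothetical value)
    half_length: float   # half the front-to-rear wheel spacing in meters
    half_width: float    # half the left-to-right wheel spacing in meters

def mecanum_wheel_speeds(vx, vy, wz, geom):
    """Return wheel angular speeds (rad/s) as (front_left, front_right,
    rear_left, rear_right) for body velocity vx, vy (m/s) and yaw rate wz (rad/s).

    Signs depend on roller orientation and motor wiring; treat them as an
    assumption to verify on real hardware.
    """
    k = geom.half_length + geom.half_width
    r = geom.wheel_radius
    front_left = (vx - vy - k * wz) / r
    front_right = (vx + vy + k * wz) / r
    rear_left = (vx + vy - k * wz) / r
    rear_right = (vx - vy + k * wz) / r
    return front_left, front_right, rear_left, rear_right

if __name__ == "__main__":
    geom = MecanumGeometry(wheel_radius=0.03, half_length=0.06, half_width=0.07)
    print("forward :", mecanum_wheel_speeds(0.2, 0.0, 0.0, geom))
    print("sideways:", mecanum_wheel_speeds(0.0, 0.2, 0.0, geom))
    print("rotate  :", mecanum_wheel_speeds(0.0, 0.0, 1.0, geom))
```

Driving straight spins all four wheels equally, strafing spins the diagonal pairs in opposite directions, and rotating on the spot spins the left and right sides in opposite directions.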

Hiwonder

Hiwonder JetTank ROS Robot Tank Powered by Jetson Nano with Lidar Depth Camera Touch Screen, Support SLAM Mapping and Navigation

【Smart ROS Robots Driven by AI】 JetTank supports Robot Operating System (ROS). It leverages mainstream deep learning frameworks, incorporates MediaPipe development, and enables YOLO model training. This combination delivers 3D machine vision applications, including autonomous driving, somatosensory interaction, and KCF target tracking.

【SLAM Development and Diverse Configuration】 Hiwonder JetTank is equipped with a 3D depth camera and Lidar. It utilizes a wide range of advanced algorithms including gmapping, hector, karto and cartographer, enabling precise multi-point navigation, TEB path planning, and dynamic obstacle avoidance.

【High-performance Hardware Configurations】 JetTank is made of aluminum alloy and employs various hardware components, including reinforced nylon continuous track, 520 Hall encoder gear motors, metal drive wheel, Lidar, Astra Pro Plus depth camera, 6-microphone array, speaker, etc.

【Far-field Voice Interaction】 The JetTank advanced kit incorporates a 6-microphone array and a speaker, allowing for human-robot interaction applications such as text-to-speech conversion, voice wake-up, 360° sound source localization, and voice-controlled mapping and navigation.

【Robot Control Across Platforms】 JetTank provides multiple control methods, including the WonderAi app (iOS & Android), a wireless handle, Robot Operating System (ROS), and a keyboard, allowing you to control the robot at will.